- Research article

- Open access

- Published:

Super-sparse principal component analyses for high-throughput genomic data

BMC Bioinformatics volume 11, Article number: 296 (2010)

Abstract

Background

Principal component analysis (PCA) has gained popularity as a method for the analysis of high-dimensional genomic data. However, it is often difficult to interpret the results because the principal components are linear combinations of all variables, and the coefficients (loadings) are typically nonzero. These nonzero values also reflect poor estimation of the true vector loadings; for example, for gene expression data, biologically we expect only a portion of the genes to be expressed in any tissue, and an even smaller fraction to be involved in a particular process. Sparse PCA methods have recently been introduced for reducing the number of nonzero coefficients, but these existing methods are not satisfactory for high-dimensional data applications because they still give too many nonzero coefficients.

Results

Here we propose a new PCA method that uses two innovations to produce an extremely sparse loading vector: (i) a random-effect model on the loadings that leads to an unbounded penalty at the origin and (ii) shrinkage of the singular values obtained from the singular value decomposition of the data matrix. We develop a stable computing algorithm by modifying nonlinear iterative partial least square (NIPALS) algorithm, and illustrate the method with an analysis of the NCI cancer dataset that contains 21,225 genes.

Conclusions

The new method has better performance than several existing methods, particularly in the estimation of the loading vectors.

Background

Principal component analysis (PCA) or its equivalent singular-value decomposition (SVD) is widely used for the analysis of high-dimensional data. For such gene expression data with an enormous number of variables, PCA is a useful technique for visualization, analyses and interpretation [1–4].

Lower dimensional views of data made possible, via the PCA, often give a global picture of gene regulation that would reveal more clearly, for example, a group of genes with similar or related molecular functions or cellular states, or samples of similar or connected phenotypes, etc. PCA results might be used for clustering, but bear in mind that PCA is not simply a clustering method, as it has distinct analytical properties and utilities from the clustering methods. Simple interpretation and subsequent usage of PCA results often depends on the ability to identify subsets with nonzero loadings, but this effort is hampered by the fact that the standard PCA yields nonzero loadings on all variables. If the low-dimensional projections are relatively simple, many loadings are not statistically significant, so the nonzero values reflect the high variance of the standard method. In this paper our focus on the PCA methodology is constrained to produce sparse loadings.

Suppose X is an n × p data matrix centered across the columns, where n and p are the number of observations and the number of variables, respectively. Also, let S X = XTX/n be the sample covariance matrix of X. In PCA, the interest is to find the linear combination z k = Xv k , for k = 1, ..., p, which maximizes

with the constraints  and v

k

⊥ v

h

for all h < k. PCA can be computed through the SVD of X. Let the SVD of X be

and v

k

⊥ v

h

for all h < k. PCA can be computed through the SVD of X. Let the SVD of X be

where D is n × p matrix with (i, i )th element d i ; the columns of Z = UD = XV are the principal component scores, and the columns of the p × p matrix V are the corresponding loadings. The vector v k in (1) is the k-th column of V.

Each principal component in (2) is a linear combination of p variables, where the loadings are typically nonzero so that PCA results are often difficult to interpret. To get sparse loadings, [5] proposed to use L1-penalty, which corresponds to the least-absolute shrinkage and selection operator (LASSO; [6]). [7] proposed to use the so-called elastic-net (EN) penalty. However, LASSO and EN may not be satisfactory either, because it can still gives too many nonzero coefficients. [8] proposed the smoothly-clipped absolute deviation (SCAD) penalty for oracle variable selection. Recently, in regression setting, [9] proposed a new random-effect model using a gamma scale mixture, which gives various types of penalty, including the normal-type (bell-shaped for ridge penalty), cusped-type (LASSO and SCAD-type), and a new (singular) unbounded penalty at the origin. [9] showed that the new unbounded penalty can yield very sparse estimates that are better than LASSO both in prediction and sparsity.

In this paper we use the random-effect model approach of [9] for sparse PCA (SPCA); the model gives unbounded gains for zero loadings at the origin, so it forces many estimated coefficients to zero. We improve the estimation further by shrinking the singular values from the SVD of the data; the resulting procedure is called super-sparse PCA (SSPCA). We provide some simulation studies that indicate that these SPCA methods perform better than existing ones, and illustrate their use using a cancer gene-expression dataset with 21,225 genes. We also show how to modify the ordinary NIPALS algorithm [10] to implement these methods computationally.

Results

Numerical studies

We first perform small simulation studies in order to assess the performance of the proposed sparse PCA methods and compare them against other methods. We generate data matrix X = (X1,…,X p ) where X i ∈ Rn, as follows:

where  , e

i

~ MVN(0, ϕI

n

), I

n

is the identity matrix of order n and u and e

i

are independent for all i. This gives the true covariance matrix,

, e

i

~ MVN(0, ϕI

n

), I

n

is the identity matrix of order n and u and e

i

are independent for all i. This gives the true covariance matrix,

where  , Σ22 = ϕIp-4and J

k

is the k × k matrix of ones. Here we consider cases (n, p) = (80, 20) for n > p and (n, p) = (50, 200) for n <p. Based on 100 simulated data, we compare our new sparse PCA method using the h-likelihood (HL; See Methodology section) with the LASSO and EN penalties for both SPCA and SSPCA methods. We also tried the SCAD method but the results are very similar to LASSO, so we do not report results for SCAD.

, Σ22 = ϕIp-4and J

k

is the k × k matrix of ones. Here we consider cases (n, p) = (80, 20) for n > p and (n, p) = (50, 200) for n <p. Based on 100 simulated data, we compare our new sparse PCA method using the h-likelihood (HL; See Methodology section) with the LASSO and EN penalties for both SPCA and SSPCA methods. We also tried the SCAD method but the results are very similar to LASSO, so we do not report results for SCAD.

From the SVD of Σ we have the true first loading vector v1 = (1/2,1/2,1/2,1/2,0, ..., 0)T. Let  be the estimate of v1. To evaluate the performance in estimation of the first loading vector, following [11], we use the sine values of the angle between true loading and estimated loading as the measure of the closeness of two vectors, i.e.

be the estimate of v1. To evaluate the performance in estimation of the first loading vector, following [11], we use the sine values of the angle between true loading and estimated loading as the measure of the closeness of two vectors, i.e.

When v1 =  , dist (v1,

, dist (v1,  ) = 0.

) = 0.

The summary of estimation performance is given in Table 1. Generally SPCA methods have much better estimation than the ordinary PCA method. Among SPCA methods, the condition-number constrained SSPCA method with HL is generally the best, although the improvement over the unconstrained method is not substantial. The improvement performance of HL over LASSO and EN is small when n > p, but it is substantial when n <p and the underlying signal is not very strong ( = 0.5).

= 0.5).

To evaluate the performance in variable selection, in Table 2 we report the percentage of selecting the true model (correctly identifying all of the true zero elements), the median number of correctly estimated zeroes divided by the number of true zeroes (true negatives) and incorrect zero estimates divided by the number of true nonzeroes (false negative). Because it does not produce zeroes, the ordinary PCA method never gets the true model and always gets 0 true negatives and 0 false negatives. The HL penalty outperforms the LASSO penalty and generally better than the EN penalty, particularly in identification of the true model. LASSO identifies fewer true negatives compared to HL. The SSPCA methods with the HL and LASSO penalties outperform the corresponding SPCA methods, but here again HL is better than LASSO and EN. The EN performs worst when n <p and the underlying signal is not very strong ( = 0.5).

= 0.5).

Finally, as a measure of the prediction power, we compute the test sample variance,

where  is the estimated loading using data matrix

is the estimated loading using data matrix  and Xtest is the independent test data sets generated from (3) with same sample size n. The results are in Table 3. SPCA methods give better prediction power than the ordinary PCA. Except for the SSPCA method when n <p and the underlying signal is not very strong (

and Xtest is the independent test data sets generated from (3) with same sample size n. The results are in Table 3. SPCA methods give better prediction power than the ordinary PCA. Except for the SSPCA method when n <p and the underlying signal is not very strong ( = 0.5), six SPCA methods have similar prediction power.

= 0.5), six SPCA methods have similar prediction power.

Analysis of NCI data

In the analysis of microarray data it is often of interest to co-regulated genes, since they will point to some common involvement in molecular functions or biological processes or cellular states. PCA is a useful tool for such analyses [1–4]; since interpretation depends on comparing the relative sizes of the loading vectors, the sparse loadings in SPCA are much easier to interpret than ordinary PCA. Furthermore, the previous section also shows that SPCA has better estimation characteristics than the ordinary PCA. For illustrations we consider the so-called NCI-60 microarray data downloaded from the CellMiner program package, National Cancer Institute http://discover.nci.nih.gov/cellminer/. Only n = 59 of the 60 human cancer cell lines were used in the analysis, as one of the cell lines had missing microarray information. The cell lines consist of 9 different cancers and were used by the Developmental Therapeutics Program of the U.S. National Cancer Institute to screen > 100,000 compounds and natural products. The number of genes is p = 21,225.

Figure 1 in the Additional file 1 gives the plots of the estimates of first loading of 21,225 variables (genes) from five PCA methods, and Table 4 shows the proportion of zero coefficients (< 0.00005) of first loading vector. The ordinary PCA has almost all nonzero loadings (99%); to interpret the results, one must apply a threshold value on the coefficients, but it is not obvious how to choose the threshold. SPCA with LASSO penalty gives only 3% zero loadings, so it is not sparse; HL penalty give more sparse loadings (37.5% zeroes), but the proportion of nonzero loadings are still quite large. SSPCA with LASSO penalty is slightly improves, with 5.4% zeroes, but it is still far from sparsity with more than 20,000 nonzero loadings. Here SSPCA with HL penalty gives the most sparse loadings, with only 6% nonzero loadings, so we have managed to force almost 20,000 loadings to zero.

To select the number of principal components, we use a permutation approach as follows. First, we randomly permute the expression values within each sample (row) of X to create permuted data X

perm

. Then PCA is performed on X

perm

to get the singular values  . We perform P = 1000 permutations, from which we can compute the p-values of the observed d

k

's. The number of principal components, k0, is such that the p-value of d

k

's is less than 0.001 when k ≤ k0.

. We perform P = 1000 permutations, from which we can compute the p-values of the observed d

k

's. The number of principal components, k0, is such that the p-value of d

k

's is less than 0.001 when k ≤ k0.

For NCI data, we get k0 = 8 (eight significant principal components). The numbers of nonzero elements in the eight loading vectors (v1…v8) are given in Table 5. We also report the adjusted variance and cumulative adjusted variance as suggested by [7] to get the explained variance properly when the principal component scores are correlated. Note that the adjusted variance is equal to the variance in the ordinary PCA because the principal components of PCA are uncorrelated. Despite the sparsity, in comparison with the ordinary PCA, both of SPCA and SSPCA method give higher cumulative adjusted variance. In fact the SSPCA method gives extremely sparse results, with only 1,260, 681 and 375 nonzero loadings for the first 3 principal components, compared to 13,259, 4,086 and 15,362 for the SPCA method. Up to the third principal component the latter has only slightly larger cumulative variance.

Figure 2 in the Additional file 1 shows the scatterplot matrix of the first 3 SSPCA scores. Except for breast cancer, the different cancer types appear in recognizable clusters in the plot. This means that the sparse vector loadings capture some underlying biological differences between the cancers. To find biological explanation, Table 6 shows the Gene Ontology (GO) [12] biological processes enrichment analyses of the nonzero loadings from the first 3 principal components from SSPCA. Only the top 20 most enriched categories with P-values < 10-5 are shown. The results indicate that the greatest variation in gene expression are associated with structure development, cell proliferation and cell death (apoptosis), and cell adhesion. These processes are closely related to the hallmarks of cancer progression such as angiogenesis (development of blood vessels), abnormal cell growth and eventually metastasis (cell migration made possible by abnormally low cell adhesion).

Comparative GO analyses from the ordinary PCA are given in the Additional file 1. We use the same number of top-ranking nonzero loadings as for the SSPCA, which are 1,260, 681 and 375 for the first 3 principal components, respectively. Out of these, the number of overlapping probes between the SSPCA and PCA are 462, 194 and 60. These overlaps are substantially more (up to 8 times more) than expected under random re-arrangement. However, there is sufficiently large number of distinct probes in the two methods, so the GO analyses could be different. The P-values from the SSPCA-based GO analyses are more significant than those from the ordinary PCA; this may be due to better estimation of the loadings, so that the SSPCA has better power than the ordinary PCA in revealing biologically-important grouping of genes.

Discussions and Conclusions

PCA is one of the most important tools in multivariate statistics, where it has been used, for example, in data reduction or visualization of high-dimensional data. The emergence of ultra-high dimensional data such as in genomics, involving 10,000s of variables but with only a few samples has brought new opportunities for PCA applications. However, there are new challenges also, particularly on the interpretation of results. If we treat PCA quantities such as the loading vectors as parameter estimates, the large-p-small-n applications typically produce very noisy estimates. This is obvious since the loading vectors are a statistic derived from the sample covariance matrix, and the latter is not well estimated.

It is well known that improved estimation can come by imposing constraints, and in this case sparsity constraint is natural. As PCA scores capture some underlying biological processes, we do not expect every gene in the genome to be involved. Out of possibly 30,000 genes we can expect only a small fraction, probably less than 1,000, to be involved in a cellular process. Hence sparsity constraint can help in reducing the number of loading parameters to estimate.

Imposing statistical constraints can be achieved by applying a penalty approach as used by the ridge regression or the LASSO methods [6]. In this paper we have investigated a random-effect model approach using a gamma scale mixture, which leads to a class of penalties that includes the ridge and LASSO penalties as special cases. One significant property is that it can produce unbounded penalties on the origin, which leads to stronger constraints and more sparse estimates. From our results it seems clear that the penalty approach alone is not able to yield sufficiently sparse PCA for high-dimensional genomic data. Additionally we also need the shrinkage on the singular values of the data matrix. In simulation studies we show that the proposed methods outperform existing methods both in estimation and model selection. Hence we believe that the new SPCA methods are promising tools for high-dimensional data analyses.

For future works, it will be of interest to apply super-sparse technique in this paper to locally-linear methods of dimensionality reduction (e.g. [13]]), partial-least squares (PLS) regression and classification methods (e.g. [14]), or other high-throughput data analysis method where dimensionality reduction is used (e.g. [15]).

Methodology

NIPALS algorithm for PCA

Standard algorithms for SVD (e.g. [16]) give the PCA loadings, but if p is large and we only want to obtain a few singular vectors, the computation to obtain the whole set of singular vectors may be impractical. Furthermore, with these algorithms it is not obvious how to impose sparsity on the loadings. [10] described a NIPALS algorithm that works like a power method ([17], p.523) for obtaining the largest eigenvalue of a matrix and its associated eigenvector. The NIPALS algorithm computes only a singular vector at a time, so it is efficient if we only want to extract a few singular vectors. Also the steps are recognizable in regression terms, so the algorithm is immediately amenable to random-effect modification as needed to obtain the various SPCA methods proposed in this paper.

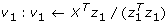

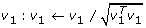

First we review the ordinary NIPALS algorithm: Set the initial value of z1 as the first column of X, then

-

1.

Find

-

2.

Normalize

-

3.

Find z 1: z 1 ← X T v 1

-

4.

Repeat steps 1 to 3 until convergence.

To obtain the second-largest singular value, first compute residual  , then apply the NIPALS algorithm above by replacing X by X2.

, then apply the NIPALS algorithm above by replacing X by X2.

Sparse PCA via random-effect models

To impose sparseness on the PCA loadings we first introduce the regression framework into step 1 of the NIPALS algorithm. Denoting X j as the j th column of X, following [18] we have

where v1jis the j th element of the p × 1 vector v1 (the first loading vector), and ∊ j is an error term. If z1 is assumed to be known, the ordinary least square (OLS) estimate for v1 is given by

Consider the penalized least-squares (PLS) estimation that minimizes

where p

λ

(·) is a penalty function. For example, p

λ

(|v1j|) = λ|v1j| gives LASSO,  gives ridge, and

gives ridge, and  gives EN, where λ, λ1 and λ2 are tuning parameters. For the prediction the ridge-type penalty is effective and for sparse estimation the LASSO-type penalty is recommended, so that EN [19] has been recommended as a compromise between the ridge and LASSO methods. [7] proposed to use EN for sparse (SPCA), but it gives less sparse estimates than LASSO.

gives EN, where λ, λ1 and λ2 are tuning parameters. For the prediction the ridge-type penalty is effective and for sparse estimation the LASSO-type penalty is recommended, so that EN [19] has been recommended as a compromise between the ridge and LASSO methods. [7] proposed to use EN for sparse (SPCA), but it gives less sparse estimates than LASSO.

[9] recently proposed the use of random-effect models to generate new penalty functions for sparse regression estimation. Suppose that v1jis a random variable such that

where θ is the dispersion parameter and u j follows the gamma distribution with a parameter w and density

such that E(u j ) = 1 and Var(u j ) = w. This model leads to a rather complex marginal distribution for v1j,characterized by parameter w and with density

This model involves a computationally difficult integral, and its direct optimization is problematic due to the nonconvexity of -log f w, θ (v1j). To overcome these problems, first note that the random-effect model (6) can be written as

where τ j = u j θ and e j ~ N(0,1). This is the double hierarchical generalized linear model [20]. With the log link, we have an additive model

This leads to the h-likelihood (HL) of [21]

where

and f θ (v1j|u j ) and f w (log u j ) are the density functions of u1j|u j and log u j , respectively. Given (w, ϕ, θ), for the estimation of v1, [9] proposed to use the profile h-likelihood

where  solves dh/du = 0.

solves dh/du = 0.

[9] showed that

with  , and the estimate of v1 can be found using the iterative weighted least squares (IWLS) by solving

, and the estimate of v1 can be found using the iterative weighted least squares (IWLS) by solving

using  and λ = ϕ/θ. In random-effect model approach, the penalty function p

λ

(|v1j|) stems from a probabilistic model

and λ = ϕ/θ. In random-effect model approach, the penalty function p

λ

(|v1j|) stems from a probabilistic model  . As noted previously the proposed penalty p

λ

(|v1j|) is nonconvex. However, by expressing the model for p

λ

(|v1j|) hierarchically as (i) v1j|u

j

is normal and (ii) u

j

is gamma, both models can be fitted by convex GLM optimizations. Thus, the proposed IWLS algorithm overcomes the difficulties of a nonconvex optimization by solving two-interlinked convex optimizations [22].

. As noted previously the proposed penalty p

λ

(|v1j|) is nonconvex. However, by expressing the model for p

λ

(|v1j|) hierarchically as (i) v1j|u

j

is normal and (ii) u

j

is gamma, both models can be fitted by convex GLM optimizations. Thus, the proposed IWLS algorithm overcomes the difficulties of a nonconvex optimization by solving two-interlinked convex optimizations [22].

Figure 1 shows HL penalties p

λ

(|v1j|) at w = 0, 2, and 30, and SCAD penalty at λ = 1. The form of the penalty changes from a quadratic shape (w = 0) for ridge regressions to a cusped form (w = 2) for LASSO and then to an unbounded form (w > 2) at the origin. In the case of w > 2, it allows an infinite gain at zero. Bell-shaped penalties have been proposed for better prediction (e.g., [23]), and cusped ones for simultaneous variable selection and estimation as in LASSO [6] or SCAD [24]. Until now, however, only finite penalties have been investigated. [9] proposed to use the unbounded penalty with w = 30, which we shall call the HL method. They illustrated the advantage of using this unbounded penalty to enhance sparse coefficient estimation. Singularities in LASSO and SCAD occur as the penalty functions have no derivatives at the origin. However, both penalties have |p

λ

(0)| < ∞ and  , while the new unbounded penalty has |p

λ

(0)| < ∞ and

, while the new unbounded penalty has |p

λ

(0)| < ∞ and  .

.

In general, the minimizer of the penalized least-squares (5) can be found using the IWLS (10) with  . The derivative

. The derivative  for LASSO, SCAD and HL penalties are summarized in Table 7. When v1j= 0, then

for LASSO, SCAD and HL penalties are summarized in Table 7. When v1j= 0, then  and the j th element of W

λ

is not defined. [9] employed a perturbed random-effect estimate

and the j th element of W

λ

is not defined. [9] employed a perturbed random-effect estimate  for a small positive δ = 10-8. Then,

for a small positive δ = 10-8. Then,  is always defined. As long as δ is small, the diagonal elements of W

λ,δ

are close to those of W

λ

and the resulting estimates are nearly identical to those of the original IWLS (10). In this paper, we report

is always defined. As long as δ is small, the diagonal elements of W

λ,δ

are close to those of W

λ

and the resulting estimates are nearly identical to those of the original IWLS (10). In this paper, we report  = 0 when

= 0 when  < 0.00005.

< 0.00005.

Other methods for sparse principal component analysis

[7] also exploit the regression property of PCA in order to obtain sparse loadings. They proposed an alternating minimization algorithm to minimize the criterion

for deriving the first sparse loading vector v1. Given θ, this optimization problem becomes a naive elastic net problem for v1. Given v1 , θ can updated from SVD of XTX v1. These two steps are repeated until v1 converges. Following [25], (11) is different from our objective function (5) even when we use the same penalty function. In fact, (5) is very close to the objective function of [26], but we put the normalization constraint of the loading inside iterated procedure so that it could make a different result. In this paper, we used the function spca() in the R-package elasticnet for the EN method in the simulation studies.

Condition-number constraint for SPCA

As shown in the previous examples, the SPCA approaches above may not produce sufficient sparsity. For the moment suppose n ≥ p; the case where n <p can be dealt with by transposing the data; see the note below. From (2) we have the eigenvalue decomposition of the sample covariance matrix as

where Λ = diag(l1, ..., l

p

and  for i = 1, ..., p is the eigenvalues of S

X

in non-increasing order (l

1

≥ … ≥ l

p

≥ 0). Let the p × 1 random vectors x1, …, x

n

be rows of X that have zero mean vector and true covariance matrix Σ with the non-increasing eigenvalues, λ1 ≥ ... ≥ λ

p

. When our goal is to estimate Σ, the sample covariance matrix S

X

can be used. Many applications require a covariance estimate that is not only invertible but also well-conditioned. An immediate problem arises when n <p, where the estimate S

X

is singular. Even when n > p, the eigen-structure tends to be systematically distorted unless p/n is small [27], resulting in ill-conditioned estimator for Σ.

for i = 1, ..., p is the eigenvalues of S

X

in non-increasing order (l

1

≥ … ≥ l

p

≥ 0). Let the p × 1 random vectors x1, …, x

n

be rows of X that have zero mean vector and true covariance matrix Σ with the non-increasing eigenvalues, λ1 ≥ ... ≥ λ

p

. When our goal is to estimate Σ, the sample covariance matrix S

X

can be used. Many applications require a covariance estimate that is not only invertible but also well-conditioned. An immediate problem arises when n <p, where the estimate S

X

is singular. Even when n > p, the eigen-structure tends to be systematically distorted unless p/n is small [27], resulting in ill-conditioned estimator for Σ.

[28] showed that the eigenvalues of S X are more dispersed than those of the true covariance matrix, i.e. l1 tends to be larger than λ1 and l p tends to be smaller than λ p . To overcome this difficulty, [29] proposed a constraint on the condition number to achieve a better covariance estimation. The optimization problem with the condition-number constraint can be formulated as

where A ≼ B denotes that B - A is positive semidefinite and t > 0. Given κmax, for t[29] proposed to use

where α ∈ {1, ... p} is the largest index such that 1/l α <t* and β ∈ {1,..., p} is the smallest index such that 1/l β > κmaxt*. Their covariance estimators are

where the eigenvalues  . To estimate the shrinkage parameter κmax, they proposed to use the K-fold cross validation.

. To estimate the shrinkage parameter κmax, they proposed to use the K-fold cross validation.

From (2) and (13), we can reconstruct X* with same singular vectors but shrunken singular values, i.e.

where D* is n × p matrix with (i, i)th diagonal element  . Thus, for condition-number constrained PCA we use X* instead of the original data matrix X. As the procedure yields extremely sparse loading vectors, we call it SSPCA, for super-sparse PCA.

. Thus, for condition-number constrained PCA we use X* instead of the original data matrix X. As the procedure yields extremely sparse loading vectors, we call it SSPCA, for super-sparse PCA.

[29] considered the estimation of covariance matrix when p is not very large. However, for large p such as over 10,000 in gene expression data, it becomes computationally too intensive. Because the aim is to obtain a few singular vectors, not whole p singular vectors, when p > n in this paper we propose to apply the above algorithm to XTand the results are transformed back appropriately.

Modified NIPALS algorithm for SPCA and SSPCA

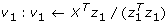

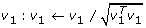

For SPCA we replace step 1 in the NIPALS algorithm by

where  is defined in (9). For SSPCA we also apply this modified step, but replace X by X* defined in (14).

is defined in (9). For SSPCA we also apply this modified step, but replace X by X* defined in (14).

Tuning parameter selection

To complete the proposed algorithm we need to estimate the tuning parameters θ and λ = ϕ/θ in (9) and (10), respectively. First we note that from (7), marginally, v1 has mean zero and variance θ, so we use  , where

, where  is the estimated first loading vector from ordinary PCA and

is the estimated first loading vector from ordinary PCA and  is the sample mean of

is the sample mean of  . We use K-fold cross-validation for λ. Following [30], we select λ which maximizes the test sample variance

. We use K-fold cross-validation for λ. Following [30], we select λ which maximizes the test sample variance

where  is the estimated loadings from the k th training sets (the whole data without the k th validation set) and SX[k]is the sample variance based on the k th validation set. For the numerical studies in Section we use K = 5.

is the estimated loadings from the k th training sets (the whole data without the k th validation set) and SX[k]is the sample variance based on the k th validation set. For the numerical studies in Section we use K = 5.

References

Alter O, Brown P, Botstein D: Singular value decomposition for genome-wide expression data processing and modeling. Proceedings of the National Academy of Science 2000, 97: 10101–10106. 10.1073/pnas.97.18.10101

Kuruvilla F, Park P, Schreiber S: Vector algebra in the analysis of genome-wide expression data. Genome Biol Epub 2002, 3(3):RESEARCH0011.1–11. 10.1186/gb-2002-3-3-research0011

Sharov A, Dudekula D, Ko M: A web-based tool for principal component and significance analysis of microarray data. Bioinformatics 2005, 21(10):2548–9. 10.1093/bioinformatics/bti343

Scholz M, Selbig J: Visualization and analysis of molecular data. Methods Mol Biol 2005, 358: 87–104. full_text

Jolliffe I, Trendafilov N, Uddin M: A modified principal component technique base on the Lasso. Journal of Computational and Graphical Statistics 2003, 12: 531–547. 10.1198/1061860032148

Tibshirani R: Regression shrinkage and selection via the LASSO. Journal of the Royal Statistical Society, series B 1996, 58: 267–288.

Zou H, Hastie T, Tibshirani R: Sparse principal components analysis. Journal of Computational and Graphical Statistics 2006, 15: 265–286. 10.1198/106186006X113430

Fan J, Li R: Variable selection via nonconcave penalized likelihood and its oracle properties. Journal American Statistical Association 2001, 96: 1348–1360. 10.1198/016214501753382273

Lee Y, Oh H: A new random-effect model for sparse variable selection. Submitted for publication

Höskuldsson A: PLS regression methods. Journal of Chemometrics 1988, 2: 211–228. 10.1002/cem.1180020306

Johnstone I, Lu A: On consistency and sparsity for principal components analysis in high dimensions. Journal of American Statistical Association 2009, 104: 682–693. 10.1198/jasa.2009.0121

Consortium GO: Gene ontology: tool for the unification of biology. Nature Genetics 2000, 25: 25–29. 10.1038/75556

Roweis S, Saul L: Nonlinear dimensionality reduction by locally linear embedding. Science 2000, 290: 2323–2326. 10.1126/science.290.5500.2323

Boulesteix A: PLS Dimension Reduction for Classification with Microarray Data. Statistical Applications in Genetics and Molecular Biology 2004, 3: 33. 10.2202/1544-6115.1075

Nueda M, Conesa A, Westerhuis J, Hoefsloot H, Smilde A, Talon M, Ferrer A: Discovering gene expression patterns in time course microarray experiments by ANOVA-SCA. Bioinformatics 2007, 23: 1792–1800. 10.1093/bioinformatics/btm251

Golub G, Reinsch C: Singular value decomposition and least squares solutions. In Handbook for Automatic Computation II: Linear Algebra. Edited by: Householder A, Bauer F. New York: Springer-Verlag; 1971.

Horn R, Johnson C: Matrix analysis. Cambridge: Cambridge university press; 1985.

Salim A, Pawitan Y, Bond K: Modelling association between two irregularly observed spatiotemporal processes by using maximum covariance analysis. Applied statistics 2005, 54: 555–573.

Zou H, Hastie T: Regularization and variable selection via the elastic net. Journal of the Royal Statistical Society, series B 2005, 67: 301–320. 10.1111/j.1467-9868.2005.00503.x

Lee Y, Nelder J: Double hierarchical generalized linear models (with discussion). Applied Statistics 2006, 55: 139–185.

Lee Y, Nelder J: Hierarchical generalized linear models (with discussion). Journal of the Royal Statistical Society, series B 1996, 58: 619–678.

Lee Y, Nelder J, Pawitan Y: Matrix analysisGeneralized Linear Models With Random Effects: Unified Analysis via H-Likelihood. London: Chapman and Hall; 2006.

Efron B, Morris C: Data analysis using Stein's estimator and its generalizations. Journal of American Statistical Association 1975, 70: 311–319. 10.2307/2285814

Fan J: Comments on "Wavelets in statistics: A review" by A. Antoniadis. Journal of Italian Statistical Association 1997, 6: 131–138. 10.1007/BF03178906

Witten D, Tibshirani R, Haste T: A penalized matrix decomposition, with application to sparse principal components and canonical correlation analysis. Biostatistics 2009, 10: 515–534. 10.1093/biostatistics/kxp008

Shen H, Huang J: Sparse principal component analysis via regularized low rank matrix approximation. Journal of Multivariate Analysis 2008, 99: 1015–1034. 10.1016/j.jmva.2007.06.007

Dempster A: Covariance selection. Biometrics 1972, 28: 157–175. 10.2307/2528966

Ledoit O, Wolf M: A well-conditioned estimator for large-dimensional covariance matrices. Journal of Multivariate Analysis 2004, 88: 365–411. 10.1016/S0047-259X(03)00096-4

Won J, Lim J, Kim S, Rajaratnam B: Maximum likelihood covariance estimation with a condition-number constraint. Submitted for publication

Parkomenko E, Tritchler D, Beyene J: Sparse canonical correlation analysis with application to genomic data integration. Statistical Applications in Genetics and Molecular Biology 2009., 8:

Acknowledgements

This research is partially funded by a grant for the Swedish Science Foundation.

Author information

Authors and Affiliations

Corresponding author

Additional information

Authors' contributions

The first two authors (DHL and WJL) contributed equally to this work. YJL and YP conceived the study and wrote the manuscript, DHL and WJL performed data analysis and wrote the manuscript. All authors read and approved the final manuscript.

Electronic supplementary material

12859_2010_3753_MOESM1_ESM.PDF

Additional file 1: The supplementary report documents details on plot of the SSPCA scores, and Gene Ontology analysis of ordinary PCA. (PDF 263 KB)

Authors’ original submitted files for images

Below are the links to the authors’ original submitted files for images.

Rights and permissions

Open Access This article is published under license to BioMed Central Ltd. This is an Open Access article is distributed under the terms of the Creative Commons Attribution License ( https://creativecommons.org/licenses/by/2.0 ), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Lee, D., Lee, W., Lee, Y. et al. Super-sparse principal component analyses for high-throughput genomic data. BMC Bioinformatics 11, 296 (2010). https://doi.org/10.1186/1471-2105-11-296

Received:

Accepted:

Published:

DOI: https://doi.org/10.1186/1471-2105-11-296